Google is releasing an update to their Core Web Vitals metric, an important part of a website’s overall SEO, in March 2024. In short, they’re replacing FID with INP. If that went over your head, don’t worry. We’ve put together a quick explainer free of code and light on acronyms that’ll help you better understand what’s on the horizon.

What are Core Web Vitals?

A couple of years ago, Google introduced their Core Web Vitals (CWV) metric, a comprehensive measurement of how responsive, fluid, and generally usable a website is. This was a way for Google to reward quality websites with a higher ranking in their search results, while simultaneously penalizing those that underperform.

They focus on three primary aspects of the user experience:

- Loading, which is measured as Largest Contentful Paint (LCP). This calculates the time it takes for the largest element on the page to appear.

- Interactivity, which is measured as First Input Delay (FID). This calculates the delay between when the user first interacts with something and when the website actually begins responding to that interaction.

- Visual Stability, which is measured as Cumulative Layout Shift (CLS). This calculates how “jumpy” a web page is, or how much the content shifts during loading.

These are important metrics, and not only for SEO. Having a website that is both snappy and stable improves customer satisfaction and brand perception. Thankfully, this is where Duda is proudly leading the pack.

We leave other web builders in the dust when it comes to Core Web Vitals, and we don’t plan to let them catch up any time soon.

What is INP?

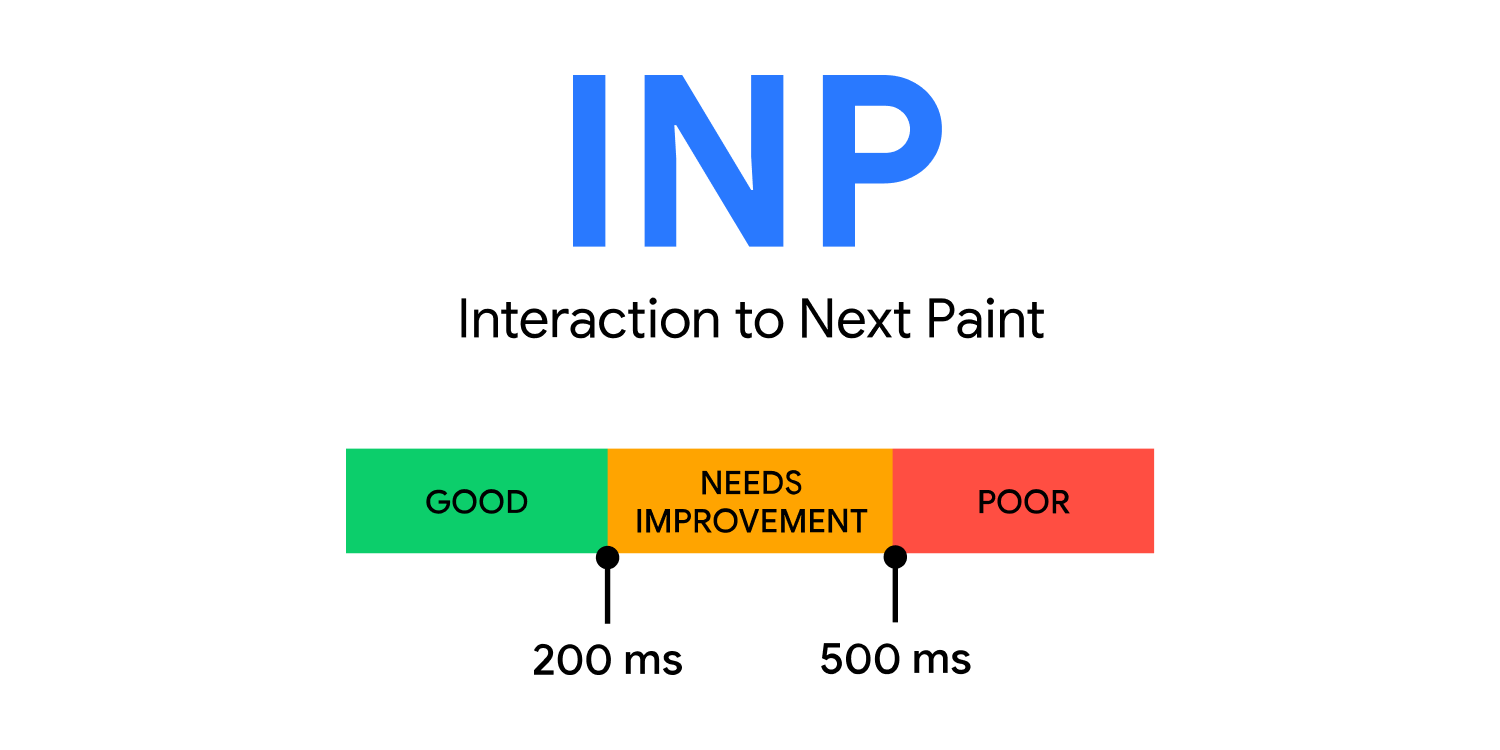

Interaction to Next Paint (INP) is the newest Core Web Vital that will be replacing First Input Delay (FID). Like FID, INP is (unsurprisingly) a measurement of interactivity.

Unlike FID, which measures only the delay before the website starts responding to a user’s first interaction, INP considers nearly all interactions on the page and reports the one that takes the longest.
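For readers who do want to peek under the hood, here is a minimal sketch of the "slowest interaction" idea. It is not Google's implementation: the `estimateInp` function and its input list are hypothetical, and the rule of skipping one outlier for every 50 interactions is included as an assumption based on how Google describes the metric for pages with many interactions.

```typescript
// Hypothetical helper, not a browser API: estimates a page's INP from a list
// of interaction latencies (in milliseconds) collected during one page visit.
function estimateInp(latenciesMs: number[]): number | undefined {
  // A visit with no interactions has no INP value to report.
  if (latenciesMs.length === 0) return undefined;

  // Sort a copy so the slowest interaction comes first.
  const slowestFirst = [...latenciesMs].sort((a, b) => b - a);

  // Assumption: ignore one outlier for every 50 interactions, so a single
  // hiccup on a very busy page does not define the whole score.
  const outliersToSkip = Math.min(
    Math.floor(latenciesMs.length / 50),
    slowestFirst.length - 1
  );

  return slowestFirst[outliersToSkip];
}

// Three interactions: the slowest one (640ms) becomes the INP.
console.log(estimateInp([80, 120, 640])); // 640
```

The takeaway is that one sluggish button can set the score for the whole page, no matter how fast everything else is.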

What all of this means is that buttons, drop-down menus, videos, and virtually every other interactive element on your webpage need to react quickly to a user’s input.

In this context, “quickly” may as well mean “instantly.” A website with a “good” INP score is reacting to a user’s input in 200 milliseconds or less. After that, a response time between 200ms and 500ms “needs improvement” and anything above 500ms is considered “poor.”

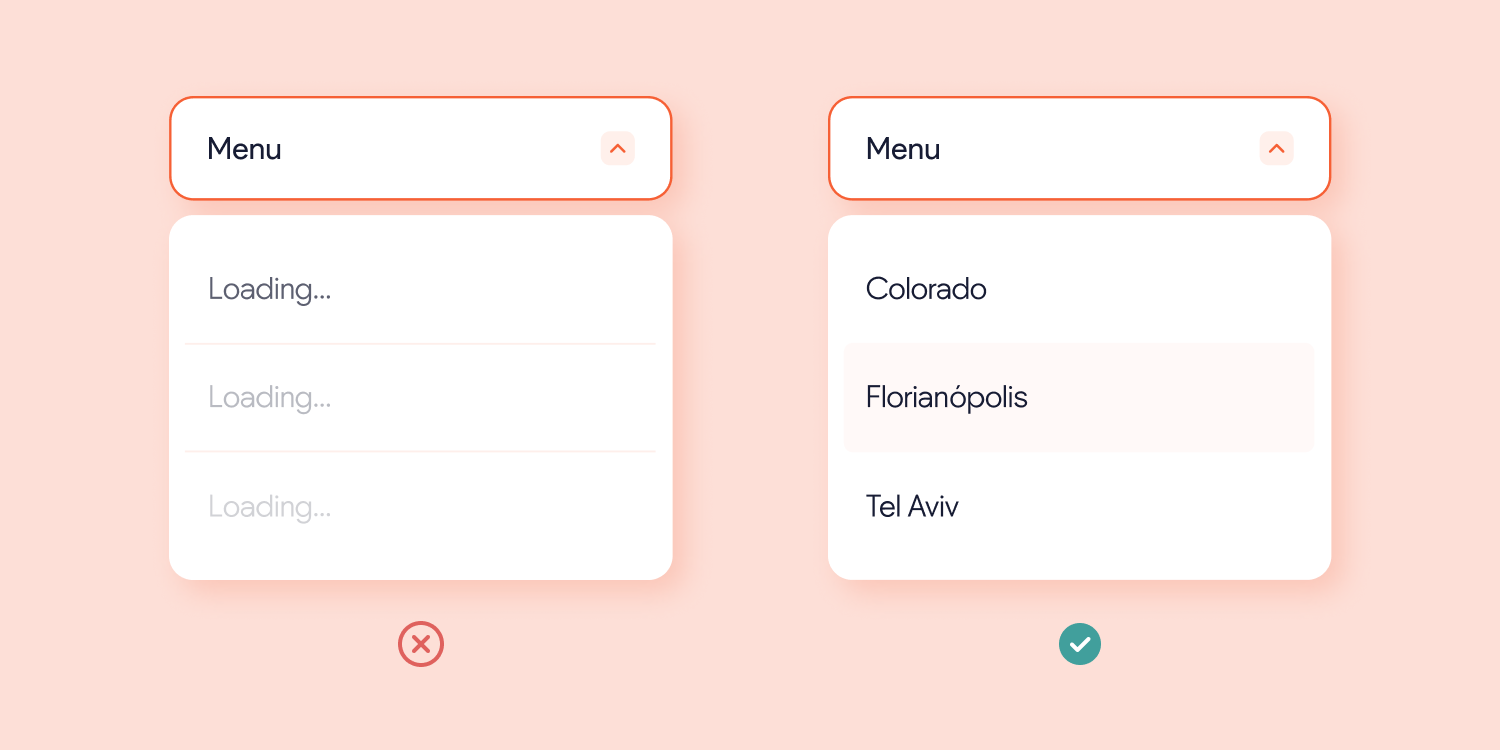

For example, pretend you’ve clicked on an arrow to open a drop-down menu on a website. Within 200ms, that menu should appear or at the very least the animation to show the menu should begin. Anything slower than that can appear buggy and create a poor experience for users on top of hurting your score.
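The thresholds above can be sketched as a tiny lookup. This is only an illustration: `rateInp` and `InpRating` are hypothetical names, and treating exactly 200ms as “good” and exactly 500ms as “needs improvement” is an assumption about how the boundaries are handled.

```typescript
// Hypothetical names for illustration; not part of any Google tool or library.
type InpRating = "good" | "needs improvement" | "poor";

// Maps an INP value in milliseconds to the rating bands described above.
function rateInp(inpMs: number): InpRating {
  if (inpMs <= 200) return "good"; // assumption: 200ms itself counts as good
  if (inpMs <= 500) return "needs improvement";
  return "poor";
}

// A drop-down that starts opening after 150ms is comfortably "good",
// while one that takes 650ms lands in "poor".
console.log(rateInp(150)); // "good"
console.log(rateInp(650)); // "poor"
```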

Will my website be ready for the change?

If you’ve built your websites with Duda, rest assured that you can continue to expect the same great Core Web Vitals performance that you’re used to. As of writing, about 82% of all websites built on Duda are already within the “good” range for the new Interaction to Next Paint (INP) metric.

Of course, Core Web Vitals are an area where we are continuously improving. Between now and March 2024, it is likely that the number of pages scoring “good” will continue to grow.

For developers, this is not an easy metric to optimize for. Since the measurement considers nearly all page interactions, it is difficult to test artificially using Google Chrome’s Lighthouse tool. For a rough idea, Google’s Search Console and the PageSpeed Insights tool are two great places to start. For “in the lab” testing, take a peek at this handy guide.

Thankfully, there are plenty of great resources available to help improve a struggling INP performance. Our very own “Optimize Your Sites for Google's Core Web Vitals” Duda University course contains excellent advice surrounding best practices to improve scoring. Additionally, the folks at web.dev have written a very helpful article for developers looking to improve the performance of their code.
