As SaaS leaders, we are constantly innovating, building features, and refining our products to meet the needs of customers. But no matter how forward-thinking or feature-rich your product is, if customers struggle to use it effectively, its value is significantly diminished.

Identifying and resolving customer friction points—the moments where customers feel confused, frustrated, or blocked in achieving their goals—is absolutely essential to delivering a truly exceptional user experience.

Uncovering these friction points takes more than intuition. It requires a combination of targeted strategies and tools that allow you to observe, understand, and ultimately fix the obstacles standing in the way of your customers.

In this post, we’ll walk through some of the most effective methods for identifying friction points and how we use them at Duda.

1. Do a click test for a simple but effective gut check

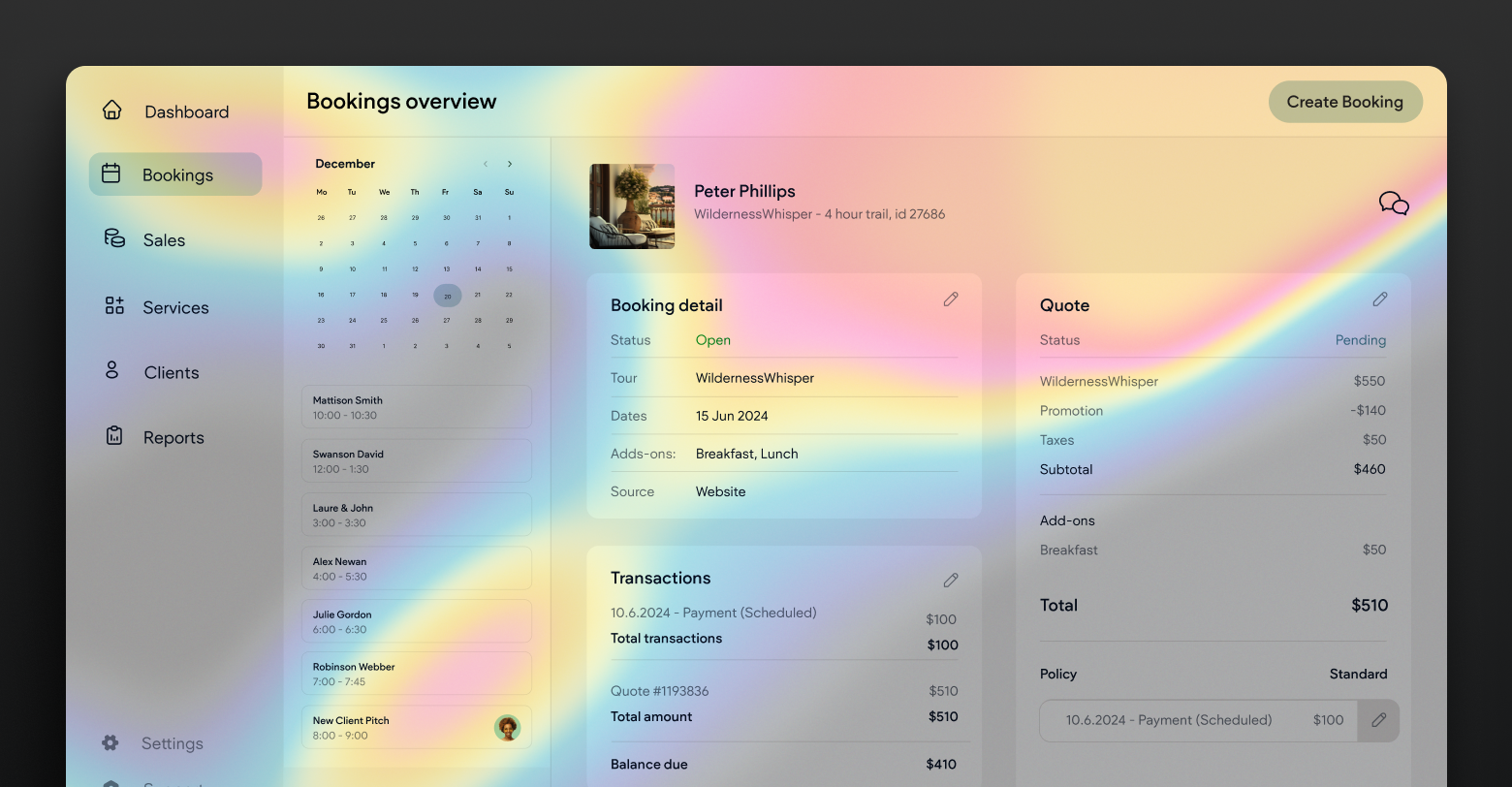

One of the simplest yet most revealing methods for identifying friction is the click test. The click test asks: how many clicks does it take to complete a basic task in the platform?

Your absolute core functions, like adding a new prospect to a relationship management system, should be front and center. Ideally, this requires one or two clicks. Other basic functions should follow a similar philosophy and require as few clicks as possible.

You can do this test with customers, recruit members of your team that aren’t in the product every day, or try it for yourself.

First, take a few minutes to write down the most important actions a person using your product needs to take. The goal here isn’t to come up with a long list of functions, but to focus on the most fundamental tasks your customers need to do each and every day.

Let’s take a look at an example: Duda is a website building platform, so these are a few core actions our customers need to be able to take quickly with as little friction as possible:

- Start a new site from scratch

- Start a new site with AI

- Start a new site with an existing template

- Add a client

- Add a team member

Great! Now we have a list of core functions.

What we’re going to do now is take it for a spin. From the first screen when a person logs in to the platform, how many clicks does it take to perform each one of these functions?

Here’s our score:

- Start a new site from scratch - 1 click

- Start a new site with AI - 1 click

- Start a new site with an existing template - 1 click

- Add a client - 2 clicks

- Add a team member - 2 clicks

If any basic function takes more than 3 clicks, you’re losing people.

Any function that crosses that line is one of your biggest friction points. Prioritize those first.

If it takes 1 or 2 clicks for each basic function, you’re doing great!
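As a rough illustration, the scoring step of the click test can be written down in a few lines of Python. This is only a sketch: the click counts are the ones from our score above, while the `find_friction` helper and the `MAX_CLICKS` constant are hypothetical names introduced for this example, not part of any Duda tooling.

```python
# Click counts recorded by hand during the click test (from the score above).
CLICK_COUNTS = {
    "Start a new site from scratch": 1,
    "Start a new site with AI": 1,
    "Start a new site with an existing template": 1,
    "Add a client": 2,
    "Add a team member": 2,
}

# Rule of thumb from this post: more than 3 clicks means you're losing people.
MAX_CLICKS = 3


def find_friction(click_counts, max_clicks=MAX_CLICKS):
    """Return (task, clicks) pairs that exceed the click budget, worst first."""
    over_budget = [
        (task, clicks)
        for task, clicks in click_counts.items()
        if clicks > max_clicks
    ]
    return sorted(over_budget, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    friction = find_friction(CLICK_COUNTS)
    if not friction:
        print("Every core function is within the click budget.")
    for task, clicks in friction:
        print(f"{task}: {clicks} clicks")
```

Keeping the counts in a simple dictionary makes it easy to rerun the same check after each release, or to repeat it with a second dictionary for your more advanced functions.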

Now you can dig a little bit deeper. Make a list of your more advanced functions and repeat the click test again.

The click test is deceptively simple, but it’s a great gut check for quickly finding and resolving glaring friction points. There are many more strategies you can employ to dig deeper into how people use your product and what’s holding them back from wholeheartedly adopting your platform.

Let’s take a look at some additional strategies…

2. Observe your entire customer journey with usability testing

Usability testing involves observing people completing full workflows within your product. This method gives you a broader view of your in-product customer behavior and uncovers deeper friction points that might not be visible in a single interaction.

In a usability test, you might ask customers to complete a task that involves multiple steps—like building a website from start to finish using your platform. Observing them step by step through this journey allows you to identify pain points that affect the overall process, such as confusing instructions, difficult navigation, or overly complex steps.

3. Interview your customers about their in-product experience

While tests and analytics provide valuable insights, nothing beats direct feedback from your customers. Customer interviews allow you to dive deep into the experiences, frustrations, and needs of your customers in their own words. These conversations can reveal pain points that wouldn’t be apparent through testing alone.

Focus on open-ended but specific questions like:

- "Can you walk me through a recent experience with [specific feature]?"

- "What, if anything, felt more difficult than you expected?"

- "What would make this feature easier for you to use?"

By listening to the specific challenges they face, you can prioritize product changes that will have the greatest impact. For example, you might learn that customers find your integrations hard to navigate, even though they are technically functional.

4. Use behavioral analysis to understand user actions at scale

In addition to qualitative feedback, behavioral analytics provides a quantitative layer that helps you understand how customers interact with your product on a broader scale.

Tools like heatmaps, session recordings, and funnel analysis offer insights into how users navigate your product and where they face obstacles.

- Heatmaps can show where customers are clicking the most, and where they’re not interacting at all. If a key call-to-action is being ignored, it may indicate that the placement, design, or copy isn’t effective.

- Session recordings allow you to replay real customer sessions, showing you exactly where they get stuck.

- Funnel analysis helps you track where customers are dropping off in their journeys, helping you identify bottlenecks that cause friction, like during sign-up or task completion.
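To make the funnel idea concrete, here is a minimal sketch of how drop-off between steps could be computed. The step names and user counts are made-up illustration data, and `funnel_dropoff` and `worst_step` are hypothetical helpers; most analytics tools calculate this for you.

```python
def funnel_dropoff(steps):
    """Compute the drop-off rate between consecutive funnel steps.

    steps: ordered list of (step_name, users_reaching_step).
    Returns a list of (from_step, to_step, drop_rate), drop_rate in [0, 1].
    """
    results = []
    for (prev_name, prev_users), (name, users) in zip(steps, steps[1:]):
        # Guard against division by zero when nobody reached the previous step.
        rate = 0.0 if prev_users == 0 else 1 - users / prev_users
        results.append((prev_name, name, round(rate, 3)))
    return results


def worst_step(steps):
    """Return the transition with the highest drop-off rate."""
    return max(funnel_dropoff(steps), key=lambda row: row[2])


if __name__ == "__main__":
    # Illustrative numbers only, not real data.
    signup_funnel = [
        ("Visited sign-up", 1000),
        ("Created account", 600),
        ("Started first site", 450),
        ("Published site", 90),
    ]
    for from_step, to_step, rate in funnel_dropoff(signup_funnel):
        print(f"{from_step} -> {to_step}: {rate:.0%} drop-off")
    print("Biggest bottleneck:", worst_step(signup_funnel))
```

The transition with the largest drop-off is usually the first place to look with session recordings or a usability test.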

5. Mine your support tickets for pain points

Your customer support team is often the first to hear about struggles and issues, so it's crucial to mine this resource regularly. Review support tickets, chat transcripts, and feedback forms to spot recurring themes and patterns. This gives you valuable insight into friction points and allows you to prioritize issues that impact a large number of customers.
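A first pass at spotting recurring themes can be as simple as counting keyword matches across ticket text. The sketch below assumes tickets are available as plain strings; the `THEMES` keyword map and the `count_themes` helper are hypothetical and would need to be adapted to your own product vocabulary.

```python
from collections import Counter

# Hypothetical theme-to-keyword map; replace with terms from your own tickets.
THEMES = {
    "billing": ["invoice", "charge", "refund"],
    "integrations": ["integration", "connect", "sync"],
    "editor": ["editor", "drag", "widget"],
}


def count_themes(tickets, themes=THEMES):
    """Count how many tickets mention each theme, most common first.

    A ticket counts once per theme, even if several keywords match.
    """
    counts = Counter()
    for text in tickets:
        lowered = text.lower()
        for theme, keywords in themes.items():
            if any(keyword in lowered for keyword in keywords):
                counts[theme] += 1
    return counts.most_common()


if __name__ == "__main__":
    # Made-up ticket subjects for illustration.
    sample_tickets = [
        "Cannot connect my CRM integration",
        "Refund for a double charge",
        "Sync keeps failing overnight",
    ]
    for theme, total in count_themes(sample_tickets):
        print(f"{theme}: {total} tickets")
```

Simple substring matching will miss synonyms and misspellings, so treat the output as a starting point for a manual review rather than a final answer.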

6. Create multiple avenues for customers to share feedback

At Duda, we’ve also developed tools like our Idea Board, where customers can submit requests for specific features or improvements, and our community can vote on these ideas. This helps us gauge which features or issues are most important to our community, and which suggestions would be the most impactful.

Additionally, our Facebook Community is another great source of real-time feedback. We actively monitor discussions to identify pain points and gather suggestions on how we can make our platform easier to use and prioritize the features our customers care about most.

7. Use AI to discover patterns and prioritize improvements

With the volume of feedback and data coming in from all these sources, it can be overwhelming to track and prioritize improvements. That’s where AI comes in.

Use AI-powered tools to analyze large datasets, including feedback from your support tickets, usability tests, and customer interviews. AI can help you quickly identify patterns in the data, highlighting recurring issues or feature requests that require attention.

For example, AI can quickly identify if a specific feature is mentioned repeatedly in support tickets or feedback forms. It can rank these issues based on sentiment, frequency, and potential impact, so you can prioritize which friction points to address first.
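The ranking step can be illustrated with a simple weighted score. To be clear, this is not an AI model and not how any particular tool works: it assumes the mention counts, sentiment scores, and impact estimates have already been produced upstream (for example by an AI-powered analysis tool), and the `Issue` class, the weights, and the sample values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    mentions: int     # how often the issue appears in feedback
    sentiment: float  # -1.0 (very negative) to 1.0 (very positive)
    impact: float     # 0.0 to 1.0, estimated share of customers affected


def priority(issue, max_mentions):
    """Combine frequency, sentiment, and impact into a 0-to-1 score.

    The 0.4 / 0.3 / 0.3 weights are arbitrary assumptions for this sketch.
    """
    frequency = issue.mentions / max_mentions if max_mentions else 0.0
    negativity = (1 - issue.sentiment) / 2  # maps [-1, 1] onto [1, 0]
    return 0.4 * frequency + 0.3 * negativity + 0.3 * issue.impact


def rank_issues(issues):
    """Return issues sorted from highest to lowest priority."""
    max_mentions = max((issue.mentions for issue in issues), default=0)
    return sorted(
        issues,
        key=lambda issue: priority(issue, max_mentions),
        reverse=True,
    )


if __name__ == "__main__":
    # Made-up values for illustration.
    backlog = [
        Issue("Confusing export dialog", mentions=10, sentiment=0.0, impact=0.1),
        Issue("Integrations hard to navigate", mentions=50, sentiment=-0.8, impact=0.6),
    ]
    for issue in rank_issues(backlog):
        print(issue.name)
```

Whatever weights you choose, writing them down explicitly makes the prioritization easier to discuss and adjust with your team.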

Final thoughts

The key to building a successful product isn’t just about adding more features—it’s about ensuring those features are easy and enjoyable for your customers to use. By actively seeking out friction points and addressing them, you’ll create a product that your customers truly love.

At Duda, we’ve built a strong feedback loop by combining qualitative insights, behavioral data, and AI to drive continuous improvement. The result is a product that’s more intuitive, customer-friendly, and effective—ultimately leading to a more loyal, engaged user base.