Of the many ways to measure your website’s performance and user experience, Google Lighthouse and Core Web Vitals stand out as two of the best known. This article compares the two approaches in depth to help you understand what they are, how they differ, and when to use them.

Download Your Lighthouse VS Core Web Vitals Cheatsheet >>

Lighthouse and Core Web Vitals: What Are They?

Lighthouse is a Google tool that audits the performance, accessibility, SEO, and other best practice quality indicators of your web pages from within a fixed test environment. Based on the audits and checks it carries out, Lighthouse generates a report that you can use to assess performance and improve your web pages.

The Lighthouse testing environment simulates what it would be like to visit your website on a slower 3G Internet connection using a mobile device. Globally, more than 90 percent of the world’s population has access to a mobile broadband network; however, 8.5 percent of that figure still uses 3G networks. This speed constraint makes any on-site issues that slow pages down stand out, while also reflecting real-world conditions for many users visiting your site.

Lighthouse also uses a fixed CPU speed to simulate the experience of visiting your website. The current device used is a Moto G4, which is not as powerful as today’s top-tier smartphones. The use of a slightly underpowered CPU in the testing environment reflects the fact that many visitors to your site use older mobile devices.

Core Web Vitals are a set of three metrics that attempt to measure and summarize the overall user experience of visiting your web pages. These metrics use field data, which contrasts with the controlled testing environment simulated by the Lighthouse tool. This field data is real-world data anonymously sent to Google from actual users when they visit a specific page.

It’s important to understand that Core Web Vitals became a Google ranking factor in August 2021. The score you get on these metrics can make a difference in how prominently you appear within Google’s own search engine results pages for your targeted search queries.

Core Web Vitals are not limited to raw speed — here are the three specific areas of user experience that they cover:

- Loading time: How long does it take for the page’s main content to load?

- Interactivity: How quickly does your page respond after the user’s first interaction with it?

- Visual Stability: How stable is your web page within users’ browsers?

Pro tip: You should rely on Core Web Vitals data as much as possible to assess performance and user experience because it’s real-world aggregated data, not point-in-time data based on a controlled set of lab conditions.


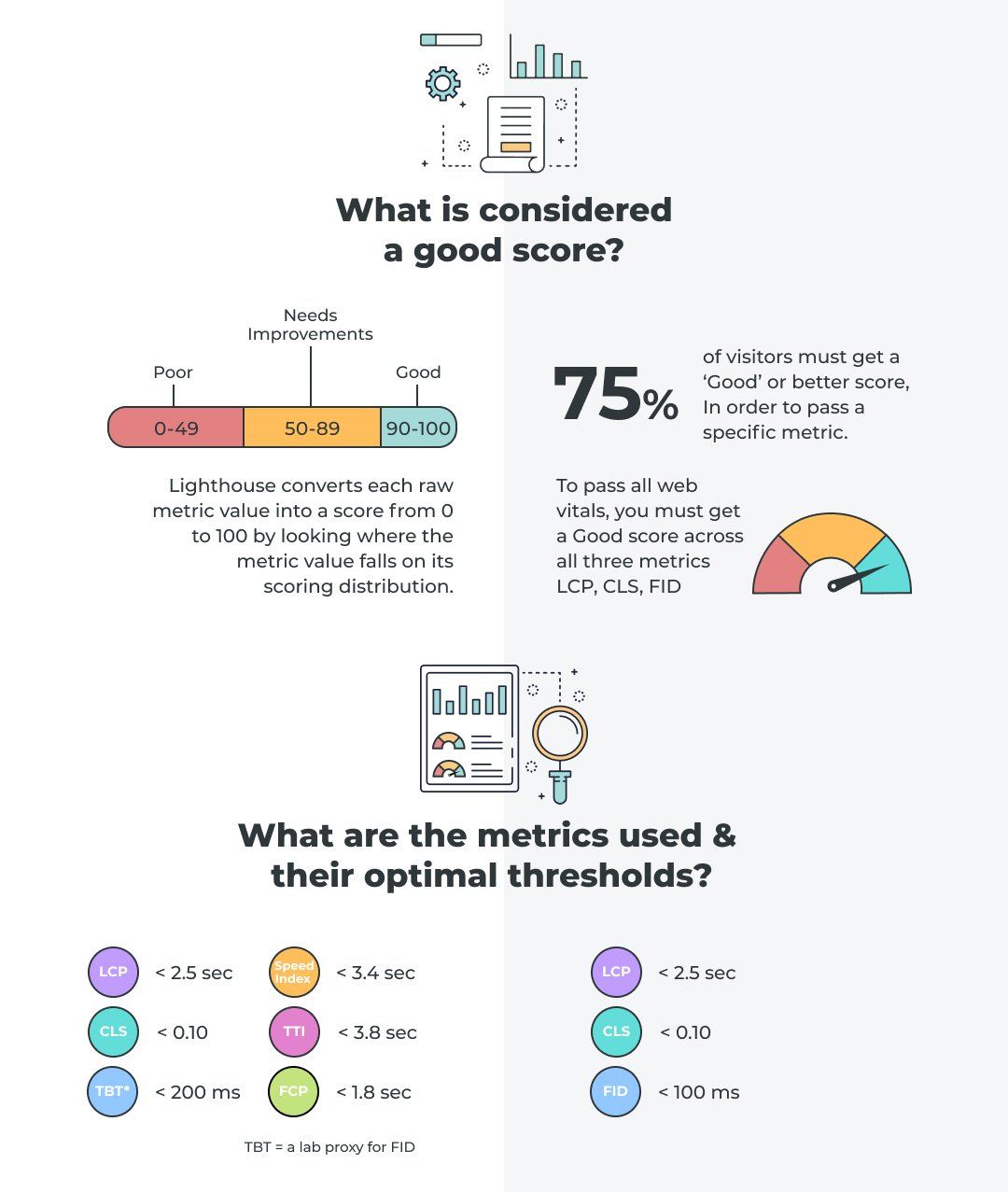

Lighthouse and Core Web Vitals: Metrics and Optimal Thresholds

So, what are the actual metrics (and their optimal thresholds) used within these two different approaches to measuring performance and user experience?

Lighthouse

The Lighthouse Performance report uses six different metrics as follows:

1) Largest Contentful Paint (LCP): measures your page’s loading time by checking how long it takes for the largest above-the-fold element (image, text, etc.) to load. Above the fold means that the metric only considers what appears on the page without scrolling down.

Optimal threshold: Less than 2.5 seconds

2) Cumulative Layout Shift (CLS): measures the visual stability of a page load by focusing on unexpected layout shifts not caused by a user interaction. The actual CLS calculation multiplies together two measures of movement: impact fraction and distance fraction. The lower the calculated score, the better visual stability your page has.

Sometimes when a page loads, elements shift around unexpectedly and frustrate users. For example, you might load a page and start reading a paragraph, only for an image to load that pushes the paragraph down the page. Specifying image dimensions is one way to improve your CLS scores (among several other ways).

Optimal threshold: Less than 0.1

3) Total Blocking Time (TBT): measures the total amount of time that a page is blocked from responding to user input. These inputs include mouse clicks or keyboard strokes.

Optimal threshold: Less than 200 milliseconds

4) First Contentful Paint (FCP): measures the time taken until the first piece of content loads on the page. The content must come from the page’s DOM (Document Object Model). The DOM includes standard page content like images and text.

Optimal threshold: Less than 1.8 seconds

5) Speed Index: measures the entire loading process for the visual parts of a web page by capturing a video of the page loading and checking the difference between frames. The total duration essentially measures how long it takes to go from blank screen to complete page.

Optimal threshold: Less than 3.4 seconds

6) Time to Interactive (TTI): measures how long the page takes to become fully interactive so that it reliably and rapidly responds to user inputs.

Optimal threshold: Less than 3.8 seconds
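Two of the calculations in the list above are simple enough to sketch in a few lines. The example below is a minimal illustration, not Lighthouse's own code. It assumes a single layout shift is scored as impact fraction times distance fraction, as described for CLS, and that TBT adds up the part of each main-thread task beyond 50 milliseconds. The 50 ms cutoff comes from Lighthouse's definition of a long task and is not stated in the text above. The function names and sample values are hypothetical.

```python
from typing import Iterable

# Assumption: 50 ms is the long-task cutoff used in Lighthouse's TBT definition.
LONG_TASK_THRESHOLD_MS = 50.0


def layout_shift_score(impact_fraction: float, distance_fraction: float) -> float:
    """Score for a single layout shift: impact fraction times distance fraction."""
    return impact_fraction * distance_fraction


def total_blocking_time(task_durations_ms: Iterable[float]) -> float:
    """Sum the portion of each main-thread task that exceeds the cutoff."""
    return sum(max(0.0, d - LONG_TASK_THRESHOLD_MS) for d in task_durations_ms)


# Hypothetical shift: impact fraction of 0.5, distance fraction of 0.25.
shift = layout_shift_score(impact_fraction=0.5, distance_fraction=0.25)
print(shift)  # 0.125, which is above the 0.1 threshold

# Hypothetical main-thread task durations during page load, in milliseconds.
tbt = total_blocking_time([30, 120, 250, 45])
print(tbt)  # 270.0, which is above the 200 ms threshold
```

In this sketch only the 120 ms and 250 ms tasks contribute to TBT (70 ms and 200 ms respectively), because the two shorter tasks never exceed the cutoff.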

Core Web Vitals

Two of the three Core Web Vitals metrics are the same LCP and CLS measures that Lighthouse uses (and they have the same optimal thresholds). The third metric in Core Web Vitals is First Input Delay (FID), which measures the interactivity and responsiveness of a page. The calculation takes the time from the user’s first interaction, such as clicking a button, until the browser can start responding to that interaction.

Remember, Lighthouse uses simulated lab data to generate reports while Core Web Vitals scores are based on real user data. FID is only measurable using real-world data because it depends on an actual user’s action. Lighthouse uses the Total Blocking Time metric as a proxy for FID’s value.


Lighthouse and Core Web Vitals: Performance Scoring

Lighthouse

As you can see from the Lighthouse scoring calculator, the report uses a weighted average calculation to provide you with a total Performance score. The LCP and TBT metrics are particularly heavily weighted in this calculation.

By looking at the distribution of all metric scores across every test performed, Lighthouse gauges where your particular page falls in that distribution. This enables Lighthouse to convert raw metric scores into a standard 0-100 value. A good Lighthouse score for any individual performance metric or for the total weighted average performance score is anything above 90.
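To make the weighted average concrete, here is a minimal sketch of how six per-metric scores could be combined into one Performance score. The weights are an assumption: they are the values published for Lighthouse 8, and other versions use different weights, so check the scoring calculator for the version you run. The function name and the sample scores are hypothetical.

```python
from typing import Mapping

# Assumption: percentage weights as published for Lighthouse 8.
WEIGHTS = {
    "FCP": 10,
    "SI": 10,
    "LCP": 25,
    "TTI": 10,
    "TBT": 30,
    "CLS": 15,
}


def performance_score(metric_scores: Mapping[str, float]) -> int:
    """Combine per-metric scores (each 0-100) into one weighted 0-100 score."""
    weighted_sum = sum(WEIGHTS[name] * metric_scores[name] for name in WEIGHTS)
    return round(weighted_sum / 100)


# Hypothetical per-metric scores for one page.
example = {"FCP": 95, "SI": 90, "LCP": 70, "TTI": 85, "TBT": 62, "CLS": 100}
print(performance_score(example))  # 78
```

With these sample values, strong FCP and CLS scores cannot lift the total above 90 because the heavily weighted LCP and TBT scores are weak.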

Core Web Vitals

The real-world focus of Core Web Vitals makes the performance scoring a bit simpler than Lighthouse. For you to score well in any of the three metrics, you must get a “Good” score in that metric for 75 percent of visitors to your page. If you score “Good” on all three metrics, you pass the Core Web Vitals assessment. The definition of “Good” is that a metric’s score falls within the previously outlined optimal threshold.

The scores are calculated using aggregated field data from many users over a 28-day period. This 28-day period is important to bear in mind because if you score badly and then make some improvements, you’ll need to wait a while for Google to pick those improvements up.
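The pass rule can be sketched as a small check over field samples. This is an illustration only, not how Google computes the assessment. It assumes you already have per-visit values for each metric, uses the LCP and CLS thresholds given earlier, and adds the 100 ms "Good" threshold that Google publishes for FID, which is not stated in the text above. All names and sample values are hypothetical.

```python
from typing import Mapping, Sequence

# "Good" thresholds: LCP in seconds, FID in milliseconds, CLS unitless.
# Assumption: the 100 ms FID value is Google's published threshold.
GOOD_THRESHOLDS = {"LCP": 2.5, "FID": 100.0, "CLS": 0.1}
REQUIRED_SHARE = 0.75  # share of visits that must be "Good"


def share_good(values: Sequence[float], threshold: float) -> float:
    """Fraction of visits whose value falls within the "Good" threshold."""
    return sum(1 for v in values if v <= threshold) / len(values)


def passes_assessment(field_data: Mapping[str, Sequence[float]]) -> bool:
    """True when every metric is "Good" for at least 75 percent of visits."""
    return all(
        share_good(field_data[name], limit) >= REQUIRED_SHARE
        for name, limit in GOOD_THRESHOLDS.items()
    )


# Hypothetical field data from four visits.
visits = {
    "LCP": [1.9, 2.1, 2.4, 3.8],     # 3 of 4 are Good
    "FID": [20, 35, 80, 250],        # 3 of 4 are Good
    "CLS": [0.02, 0.05, 0.3, 0.4],   # only 2 of 4 are Good
}
print(passes_assessment(visits))  # False, because CLS misses the 75 percent bar
```

A page must clear the bar on all three metrics, so one weak metric is enough to fail the assessment even when the other two pass.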


Lighthouse and Core Web Vitals: Measurement Tools

Lighthouse

If you want to get a Lighthouse report, the most user-friendly ways are to use the Lighthouse extension for your Chrome web browser or to simply visit Google's Web Dev Portal and enter your URL. Other options include Chrome DevTools, PageSpeed Insights, and the command line.
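If you go the command-line route, you can save the report as JSON (for example with `lighthouse https://example.com --output json --output-path ./report.json`, where the URL and file name are placeholders) and pull out the numbers you care about. The sketch below assumes the report keeps the overall score under `categories.performance.score` on a 0 to 1 scale and each metric under `audits[<id>].numericValue`. The helper name and the trimmed-down sample report are hypothetical.

```python
import json
from typing import Any, Dict, Mapping

# Audit ids assumed to match those used in Lighthouse's JSON output.
METRIC_AUDITS = {
    "lcp_ms": "largest-contentful-paint",
    "tbt_ms": "total-blocking-time",
    "cls": "cumulative-layout-shift",
}


def summarize_report(report: Mapping[str, Any]) -> Dict[str, float]:
    """Extract the Performance score (0-100) and a few raw metric values."""
    score = report["categories"]["performance"]["score"]  # stored as 0.0-1.0
    summary: Dict[str, float] = {"performance": round(score * 100)}
    for key, audit_id in METRIC_AUDITS.items():
        summary[key] = report["audits"][audit_id]["numericValue"]
    return summary


# Hypothetical, heavily trimmed report standing in for a real report.json.
SAMPLE_JSON = """
{
  "categories": {"performance": {"score": 0.87}},
  "audits": {
    "largest-contentful-paint": {"numericValue": 2310.5},
    "total-blocking-time": {"numericValue": 180.0},
    "cumulative-layout-shift": {"numericValue": 0.04}
  }
}
"""

print(summarize_report(json.loads(SAMPLE_JSON)))
```

For a real run, replace the sample string with the contents of the saved report file loaded through `json.load`.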

Core Web Vitals

A number of tools measure Core Web Vitals, including the Chrome User Experience Report (CrUX), Google Search Console, and PageSpeed Insights. The quickest and simplest way to check any URL’s Core Web Vitals is through PageSpeed Insights.

Duda’s Core Web Vitals Achievements

Among all website builders, Duda leads the way in ensuring our users’ websites score consistently well on Core Web Vitals. Given that Core Web Vitals are a ranking factor in Google search results, it’s important that your website or your clients’ websites score well in these metrics. Good Core Web Vitals scores are also vital from a conversion standpoint — customers prefer to do business with sites that provide a solid performance and strong user experience.

Since Core Web Vitals became a ranking factor, Duda leads the way with the highest proportion of sites on our platform scoring “Good” versus competitors such as WordPress, Drupal, Wix, and Squarespace. Some competitors (no names mentioned) omit Duda from their Data Studio comparison graphs in an attempt to make it look like they are industry leaders. Pro tip: if you want the full picture, visit this Google Data Studio link and see how all platforms perform against each other.
